For over a year, I’ve been working with AI to generate recipes for my platform, whippedup.app. I tried different approaches based on machine-learning models and ended up settling on OpenAI’s GPT-3, the technology behind the wildly popular ChatGPT. The platform has been live for a while now, and I can see the long-term results of GPT-generated recipes. The punchline is that they’re not great. So today, I will explain why and, more broadly, give some intuition for why technologies like GPT are unlikely to replace creative content creation in the foreseeable future.

GPT for Humans

To start building the intuition for my explanation, we first have to look at how, roughly, models like GPT-3 work. So let’s dive into it.

To use any neural network-based AI, we first have to train it. The process looks like this. First, create a large dataset of examples of what we want the AI to learn from; then build a model that suits the task, and run all the data through until the model learns enough to create good predictions; finally, use the model for a real-life application.

If we go one level deeper into the training process, we can see that during the training phase, we have to run the data multiple times through the model for it to learn. We stop this process when the model produces reasonable results without exactly replicating any of the training examples.
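
As a rough sketch of this loop, here is a toy example in Python. Everything in it is an assumption made for illustration: the data, the one-parameter model, and names such as `train_model` are hypothetical, and the stopping rule (stop once the error on held-out examples is small enough) is only one common way to decide when the model has learned enough. GPT is far larger, but the shape of the loop is similar.

```python
# Toy data following y = 2x, split into training and held-out examples.
train = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]
held_out = [(4.0, 8.0), (5.0, 10.0)]


def mean_squared_error(w, data):
    """Average squared error of the model y = w * x on the given data."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


def train_model(train, held_out, learning_rate=0.01, max_epochs=1000, tolerance=1e-6):
    """Run the training data through the model repeatedly until it predicts well."""
    w = 0.0  # the single parameter the model has to learn
    history = []  # held-out error after each pass over the data
    for _ in range(max_epochs):
        # One pass (epoch) over every training example.
        for x, y in train:
            gradient = 2 * (w * x - y) * x
            w -= learning_rate * gradient
        # Check quality on examples the model never trained on.
        loss = mean_squared_error(w, held_out)
        history.append(loss)
        if loss < tolerance:
            break  # reasonable results reached, so stop training
    return w, history


w, history = train_model(train, held_out)
print(f"learned w = {w:.4f} after {len(history)} passes over the data")
```

The model needs several passes over the same three examples before its held-out error is small, which is the repeated running of the data described above.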

The model learns from the data because it can detect and extract patterns, building an intricate statistical model. This fact will be a crucial piece for my discussion later.

Finally, we say that an AI model is overfitted when it has memorized its training examples instead of learning general patterns; in the extreme case, it outputs an identical copy of a training example no matter the input. Remember this term, too, for later.
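
One simple way to spot this kind of replication is to measure how much of a generated text appears word for word in the training data. The sketch below is an assumption-laden illustration, not a standard overfitting test: the helper names (`word_ngrams`, `copied_fraction`), the four-word window, and the example sentences are all made up for this post.

```python
def word_ngrams(text, n):
    """Return the set of all runs of n consecutive words in the text."""
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def copied_fraction(generated, training_examples, n=4):
    """Fraction of the generated text's n-word runs found verbatim in training data."""
    generated_ngrams = word_ngrams(generated, n)
    if not generated_ngrams:
        return 0.0
    training_ngrams = set()
    for example in training_examples:
        training_ngrams |= word_ngrams(example, n)
    return len(generated_ngrams & training_ngrams) / len(generated_ngrams)


# Hypothetical training examples and model outputs.
training_examples = [
    "whisk the butter and flour over low heat until smooth",
    "simmer the sauce for ten minutes and season to taste",
]
memorized = "whisk the butter and flour over low heat until smooth"
novel = "fold the flour into the butter then simmer until thick"

print(copied_fraction(memorized, training_examples))
print(copied_fraction(novel, training_examples))
```

A score near 1 means the output is essentially a copy of something the model saw during training, while a score near 0 means the wording is new, although it says nothing about whether the content is any good.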

With these fundamentals, let me now talk about how GPT works. GPT stands for Generative Pretrained Transformer. The name tells us many things, but the only important word for non-AI people is generative. Generative means that this particular AI is good at generating content.

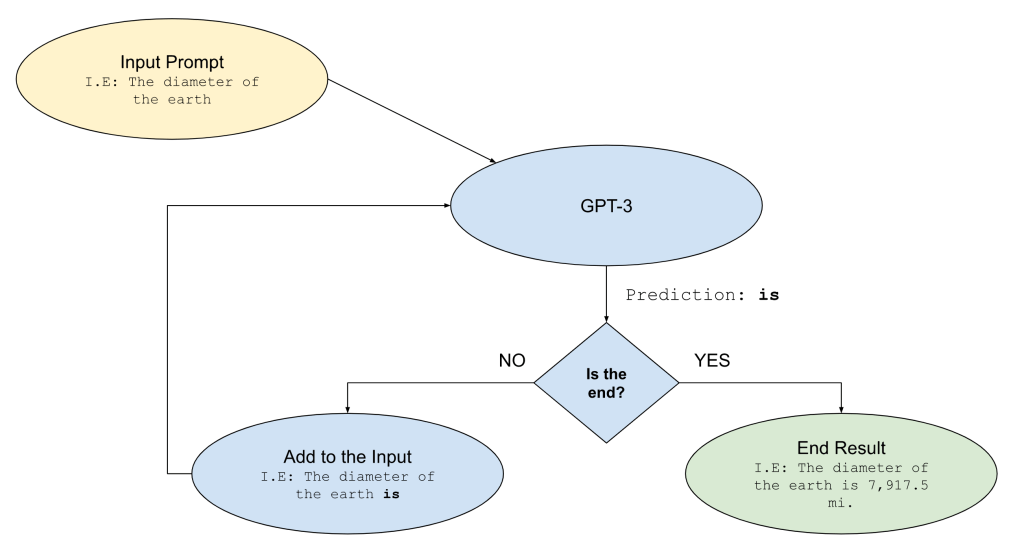

To generate content, GPT requires an initial input prompt. Then it will auto-complete words until it predicts a special ‘end-of-sequence’ token that signals the generation process is over. Let me clarify this with a drawing.

The previous illustration shows the process for a simple sentence. To clarify further how it works, I will explain how GPT-3 can predict the simple sentence “The diameter of the earth is 7,917.5 mi.” from the input “The diameter of the earth”.

| Input | Predicted Word | Is the End? |
|---|---|---|
| The diameter of the earth | is | NO |
| The diameter of the earth is | 7,917.5 | NO |
| The diameter of the earth is 7,917.5 | mi | NO |
| The diameter of the earth is 7,917.5 mi | . | YES |
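
The steps in the table can be sketched as a small loop. This is a toy illustration under stated assumptions: the hypothetical `NEXT_WORD` lookup table stands in for the real model, which computes its predictions instead of looking them up, and the sketch treats the end-of-sequence marker as a separate token predicted after the final period.

```python
END = "<end>"  # stand-in for the special end-of-sequence token

# Hypothetical "model": maps the text so far to the next predicted word.
NEXT_WORD = {
    "The diameter of the earth": "is",
    "The diameter of the earth is": "7,917.5",
    "The diameter of the earth is 7,917.5": "mi",
    "The diameter of the earth is 7,917.5 mi": ".",
    "The diameter of the earth is 7,917.5 mi.": END,
}


def predict_next(text):
    """Return the next word for the text so far, or END if nothing is known."""
    return NEXT_WORD.get(text, END)


def generate(prompt, max_steps=20):
    """Auto-complete the prompt one word at a time until END is predicted."""
    text = prompt
    for _ in range(max_steps):
        word = predict_next(text)
        if word == END:
            break
        # Punctuation attaches directly; other words are separated by a space.
        text += word if word == "." else " " + word
    return text


print(generate("The diameter of the earth"))
```

Each pass through the loop feeds the whole text so far back into the predictor, which is why the Input column in the table grows by one word per row.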

Finally, GPT knows how to predict this information because its training data included a large share of the text publicly available on the internet shortly before its creators trained it. This fact has some profound implications:

- The generated information will be legitimate most of the time, but nothing guarantees it. For example, if one of the training examples was a Wikipedia entry about the earth and another was a forum thread from the Flat Earth Society, the AI could confidently output that the planet has no diameter because it’s flat.

- The generated information can replicate human biases. The AI community is doing great work to identify these biases and correct the input data so that the model doesn’t replicate them.

- The generated information has no human-level reasoning behind it. It only reflects how statistically likely the words are to appear together, and that’s it.

- The generated information is not entirely new. This fact comes from how it’s generated; entire sentences could be exact replicas of blog posts, Reddit threads, or anything else in between.
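
To make the first and third points concrete, here is a minimal sketch of how conflicting training text turns into probabilities. It assumes a made-up four-sentence corpus and a hypothetical helper, `next_word_probabilities`, that simply counts which word follows a given context. GPT uses a neural network rather than raw counts, but the intuition carries over: the output reflects how often things were written, not whether they are true.

```python
from collections import Counter

# Hypothetical training corpus: three reliable sources and one flat-earth thread.
corpus = [
    "the earth is round",
    "the earth is round",
    "the earth is round",
    "the earth is flat",
]


def next_word_probabilities(corpus, context):
    """Estimate next-word probabilities by counting what follows the context."""
    context_words = context.split()
    size = len(context_words)
    counts = Counter()
    for sentence in corpus:
        words = sentence.split()
        for i in range(len(words) - size):
            if words[i:i + size] == context_words:
                counts[words[i + size]] += 1
    total = sum(counts.values())
    if total == 0:
        return {}
    return {word: count / total for word, count in counts.items()}


probs = next_word_probabilities(corpus, "the earth is")
print(probs)
```

With this corpus, "flat" still gets a quarter of the probability, so a model that samples from these numbers would state it confidently some of the time.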

Why is GPT not Good for Cooking Recipes?

Given how this technology works, we can now say why it produces impressive results from a researcher’s perspective but not a home cook’s. The scientific miracle is having a piece of technology so intricate and refined that it can extract nuances of human writing in any language and replicate many characteristics. These characteristics include understanding the meaning of text and structure. Therefore, ChatGPT can replicate, for example, human conversations without specific training and output text in any format.

The problematic aspect of recipes is that they explain the process of cooking something by enumerating a chef’s steps, which reflect that chef’s experience, personal preferences, and culinary knowledge. This cooking process is inherently subjective, and it’s extremely hard even for humans not trained in culinary techniques to pick up the nuances and intentions behind the chef’s reasoning. Some steps may be there for texture, or so that the final product elicits a certain feeling or memory. None of that is usually apparent to the recipe’s reader; it can only be felt after tasting.

Recipes also have a mathematical aspect. Quantities of ingredients have a special meaning, and different recipes will call for different amounts of the same ingredient in the same context to achieve different effects. One easy example is mixing butter and flour to thicken a sauce or to make a roux.

Given that the technology understands the statistical properties of the text but not the underlying mathematical context, generated recipes will have two major flaws:

- Quantities of ingredients may look real but not necessarily make sense for the recipe.

- Steps involved may work but not follow the right rationale for the recipe.
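
The first flaw is the kind of thing a plain arithmetic check can catch, precisely because the check encodes knowledge that is not in the text itself. The sketch below assumes, purely for illustration, a roux made with roughly equal weights of butter and flour; the function name `ratio_is_plausible`, the 25% tolerance, and the sample quantities are hypothetical and not taken from my platform.

```python
def ratio_is_plausible(butter_g, flour_g, expected_ratio=1.0, tolerance=0.25):
    """Check that flour-to-butter weight stays close to an expected ratio.

    expected_ratio and tolerance are illustrative assumptions, not culinary rules.
    """
    if butter_g <= 0 or flour_g <= 0:
        return False
    ratio = flour_g / butter_g
    return abs(ratio - expected_ratio) <= tolerance * expected_ratio


# Quantities that read fine as text but differ wildly in how much sense they make.
print(ratio_is_plausible(butter_g=50, flour_g=50))
print(ratio_is_plausible(butter_g=50, flour_g=400))
```

Both ingredient lists look equally real on the page; only the second one fails once the relationship between the quantities is checked.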

These flaws match what I observed in the recipes my platform generated, and they summarize the critiques that multiple chefs have made of the ChatGPT-generated recipes they tested.

Generating something great would take an overfitted GPT replicating one of its training examples, and that would be mere plagiarism.

Where is it Safe to Use GPT, and Where Not?

GPT carries the great promise of unlimited content generation, but as we saw, we can’t trust this content. Still, GPT is a great technology that’s helpful for many uses. In general, as long as your text doesn’t have to be accurate or have a complex logic behind it, you should be alright; this includes the following applications:

- Creative idea generation. Humans also get creative by combining previously existing ideas in novel ways. GPT will excel at this task.

- Simple Q&A and trivia, with the understanding that not all of the content may be true.

- Simple chatbots. For example, customer support centers can use them to help customers find answers without human intervention.

- Simple translation and grammar-checking tasks.

- Template content generation.

In general, GPT can do OK at many other tasks, but sometimes the price of being wrong is too high: for example, a bad reputation for your business, or even worse.

In Summary

GPT-3 and ChatGPT are impressive machine-learning models that enable many tools to empower creativity and can perform many natural language tasks very well. Even more impressive is that they can do this zero-shot, with their pretraining only. There are, however, many applications where they will not perform as well as, or even close to, humans; these are areas where the reasoning behind the structure of the text cannot be inferred directly from it, as in cooking recipes or areas that require scientific rigor.

To learn more about ChatGPT, you can visit OpenAI’s website. Please leave any questions or comments in the section below.
