Last year, 2022, was a big year for me in terms of personal and professional growth. I began the year by diving into Machine Learning, then completed several specialization courses and read a textbook and countless research papers.

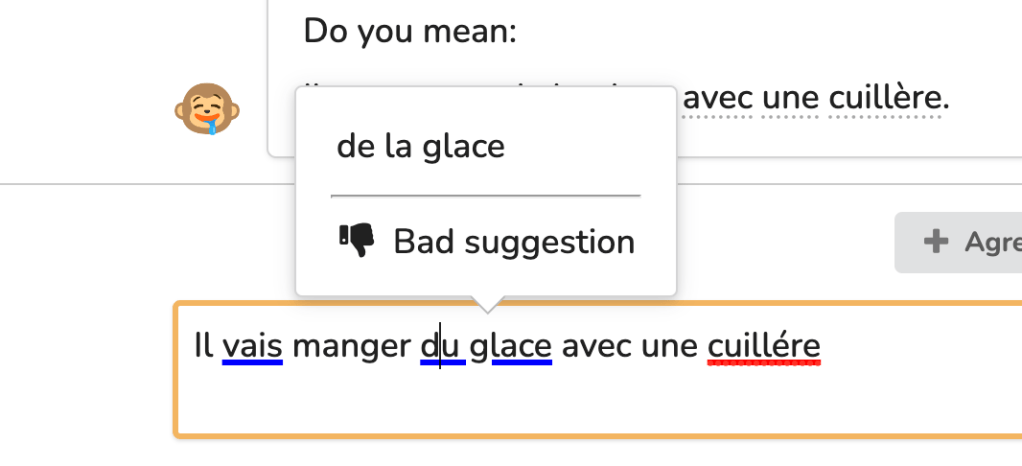

My good friend Dan created a language learning platform, “Squidgies.” The platform leans heavily on machine learning techniques to produce virtually unlimited content for language learners. I contributed a grammar correction model for multiple languages to this platform, and in today’s blog post I will walk you through how I built it.

Model Architecture

The problem of Grammatical Error Correction (GEC) is not new. I still remember in the 1990s when Microsoft Word attempted to correct my grammar and did what looked like a good job at the time. Since then, there have been significant advances in this technology thanks to companies like Grammarly and LanguageTool.

Many of the papers I read, including some from researchers at Microsoft, used variations of RNNs, such as LSTMs, to train GEC models, but I decided to go with a Transformer model. For those unfamiliar with Natural Language Processing, the Transformer architecture was proposed in 2017 by researchers at Google to overcome many of the limitations of LSTMs (and, broadly, of all variations of Recurrent Neural Networks), most notably that they process tokens one after another and so cannot be trained in parallel.

I chose the T5 model, an Encoder/Decoder Transformer, because it produces decent results on many natural language processing tasks without architectural changes. All you have to do is frame your problem as a text-to-text problem, much like translation: the model reads an input string and writes an output string. Since the problem involved multiple languages, I used Google’s mT5, the multilingual variant of T5.
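
To make the framing concrete, here is a minimal sketch of how a correction pair could be turned into a text-to-text example. The `grammar: ` prefix and the field names are assumptions for illustration; the post does not say which prefix or data format was actually used.

```python
from typing import Dict, List, Tuple

# Assumption: "grammar: " is a made-up task prefix, not one taken from the post.
TASK_PREFIX = "grammar: "


def to_text_to_text(incorrect: str, correct: str, prefix: str = TASK_PREFIX) -> Dict[str, str]:
    """Frame one correction as 'translate the faulty sentence into the fixed one'."""
    return {"input_text": prefix + incorrect, "target_text": correct}


def build_examples(pairs: List[Tuple[str, str]]) -> List[Dict[str, str]]:
    """Convert (incorrect, correct) pairs into text-to-text training examples."""
    return [to_text_to_text(src, tgt) for src, tgt in pairs]


examples = build_examples([
    ("could use your help cleaning.", "I could use your help cleaning."),
])
print(examples[0])
```

The same format works for any language, which is part of what makes the text-to-text framing convenient for a multilingual model.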

Another contender was BERT. Grammarly researchers experimented with this model in their GECToR paper by framing the problem as token tagging, but this approach didn’t seem appropriate for multiple languages.

The Dataset

As anyone in Machine Learning knows, your model will only be as good as your data. I read several research papers on this topic to understand how to source the data, and many researchers agreed that you could achieve state-of-the-art results with a synthetic dataset.

My first approach to generating a synthetic dataset was to write Python code that took supposedly grammatical sentences and applied transformations to them to introduce typical grammar errors. I hand-coded many transformations that mimicked common language-learner mistakes, which I researched on the internet and discussed with some friends who are language teachers.
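
As a rough idea of what such hand-coded transformations can look like, here is a small sketch. The three rules below are illustrative assumptions and not the actual rules I wrote for the dataset; they are also English-only and deliberately naive.

```python
import random
from typing import Callable, List, Tuple

ARTICLES = {"a", "an", "the"}
PRONOUNS = {"i", "he", "she", "we", "they", "you", "it"}
AGREEMENT_SWAPS = {"is": "are", "are": "is", "was": "were", "were": "was"}


def drop_articles(sentence: str) -> str:
    """Remove articles, a typical mistake for speakers of article-less languages."""
    return " ".join(w for w in sentence.split() if w.lower() not in ARTICLES)


def break_agreement(sentence: str) -> str:
    """Swap is/are and was/were to break subject-verb agreement."""
    return " ".join(AGREEMENT_SWAPS.get(w, w) for w in sentence.split())


def drop_leading_pronoun(sentence: str) -> str:
    """Drop a sentence-initial subject pronoun."""
    words = sentence.split()
    if len(words) > 1 and words[0].lower() in PRONOUNS:
        return " ".join(words[1:])
    return sentence


# Hypothetical rule set; a real one would need many more rules per language.
RULES: List[Callable[[str], str]] = [drop_articles, break_agreement, drop_leading_pronoun]


def make_pair(sentence: str, rng: random.Random) -> Tuple[str, str]:
    """Return (corrupted, original), trying rules in random order until one applies."""
    for rule in rng.sample(RULES, k=len(RULES)):
        corrupted = rule(sentence)
        if corrupted != sentence:
            return corrupted, sentence
    return sentence, sentence  # no rule applied; the pair stays unchanged


rng = random.Random(0)
print(make_pair("I could use your help cleaning.", rng))
```

Each pair puts the corrupted sentence on the input side and the original sentence on the target side, so the model learns to undo the error.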

Benchmarks

One of my biggest challenges was measuring progress. Initially, I tried to use the SacreBLEU metric, but it is not ideal for grammar correction because a system can fix the same mistake in several different ways, all of them correct. Let me illustrate:

Sentence to correct: “could use your help cleaning.”

Candidate solution: “I could use your help cleaning.”

Alternative solutions: “He could…”, “She could…”, “They could…”, “Someone could…” and so forth.

If I relied on BLEU, I would get lower scores than I should, just because the system chose an alternative solution. On the plus side, BLEU is language-agnostic, so I could use it with sentences in any of the languages.
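
The effect is easy to reproduce with a simplified n-gram overlap score. The sketch below computes only the clipped n-gram precision that BLEU is built on; it is not SacreBLEU itself (no brevity penalty, no smoothing, whitespace tokenization), so treat the numbers as an illustration of the problem rather than real BLEU scores.

```python
from collections import Counter
from typing import List, Tuple


def ngrams(tokens: List[str], n: int) -> List[Tuple[str, ...]]:
    """All contiguous n-grams of a token list."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def clipped_precision(candidate: str, reference: str, n: int) -> float:
    """Share of candidate n-grams that also occur in the reference (counts clipped)."""
    cand = Counter(ngrams(candidate.split(), n))
    ref = Counter(ngrams(reference.split(), n))
    total = sum(cand.values())
    if total == 0:
        return 0.0
    matched = sum(min(count, ref[gram]) for gram, count in cand.items())
    return matched / total


reference = "I could use your help cleaning."
same = "I could use your help cleaning."
alternative = "She could use your help cleaning."

for n in (1, 2):
    print(n, clipped_precision(same, reference, n), clipped_precision(alternative, reference, n))
```

The alternative correction is just as grammatical, yet it matches only 5 of 6 unigrams and 4 of 5 bigrams, so it is scored below the candidate that happens to agree with the reference.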

An alternative metric is the M2 score used by the CoNLL-2014 and BEA benchmark datasets. This metric uses ERRANT to give Precision, Recall, and F0.5 (an F-score that weights precision twice as heavily as recall), but the downside is that you need a benchmark dataset for each language you want to test.

I decided to use the latter and assumed that performance on English would be a good gauge for the other languages.
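
For readers who have not met F0.5 before, here is a minimal sketch of how precision, recall, and F-beta follow from comparing the edits a system proposes with the edits in the gold annotation. The edit representation (token start, token end, replacement) and the example edits are assumptions for illustration, and the handling of empty edit sets is a convention I chose here; the real scorers do the alignment and edit extraction for you.

```python
from typing import Set, Tuple

Edit = Tuple[int, int, str]  # (start token, end token, replacement text)


def precision_recall_f(hyp: Set[Edit], gold: Set[Edit], beta: float = 0.5) -> Tuple[float, float, float]:
    """Score proposed edits against gold edits; beta < 1 favours precision."""
    tp = len(hyp & gold)   # proposed edits that are in the gold annotation
    fp = len(hyp - gold)   # proposed edits that are not in the gold annotation
    fn = len(gold - hyp)   # gold edits the system missed
    # Assumed convention: with no false positives (or negatives) the ratio is 1.0.
    precision = tp / (tp + fp) if fp else 1.0
    recall = tp / (tp + fn) if fn else 1.0
    if precision + recall == 0:
        return precision, recall, 0.0
    b2 = beta * beta
    f = (1 + b2) * precision * recall / (b2 * precision + recall)
    return precision, recall, f


# Hypothetical edits: the system gets two right, invents one, and misses two.
gold_edits = {(0, 0, "I"), (2, 3, "uses"), (5, 6, "cleaning"), (7, 7, ".")}
hyp_edits = {(0, 0, "I"), (2, 3, "uses"), (4, 5, "yours")}

p, r, f05 = precision_recall_f(hyp_edits, gold_edits)
print(round(p, 3), round(r, 3), round(f05, 3))
```

Weighting precision more heavily fits grammar correction well: a wrong "correction" shown to a learner does more harm than a missed one.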

Refinements to the Dataset

As I progressed and got better results, it became evident that I couldn’t hand-code every possible grammar mistake people make. That was when I stumbled upon a beautiful idea from the M4-GEC synthetic dataset paper: train a machine learning model to learn which errors learners make and then use it to augment my data.

The idea was great because all I needed was the correction history from Squidgies and its Multi-Language ERRANT implementation to tag the mistakes. With the tagged data, I could train a T5 model to reproduce those errors in grammatical sentences. This new approach allowed me to expand my synthetic dataset and to estimate how frequent each error type is, by ERRANT tag. Knowing those frequencies, I could help my final model better predict the most likely solution when there are multiple possible correct solutions.
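
The frequency part of this idea can be sketched in a few lines: count how often each tag occurs in the tagged history, turn the counts into a distribution, and sample error types from it when corrupting new sentences. The tags below follow ERRANT's naming style, but the list itself is made up for illustration and is not Squidgies data.

```python
import random
from collections import Counter
from typing import Dict, List

# Hypothetical tagged history: one ERRANT-style tag per observed learner error.
observed_tags = [
    "R:VERB:SVA", "M:DET", "M:DET", "R:SPELL",
    "M:DET", "U:PREP", "R:VERB:SVA", "M:DET",
]


def tag_distribution(tags: List[str]) -> Dict[str, float]:
    """Relative frequency of each error tag."""
    counts = Counter(tags)
    total = sum(counts.values())
    return {tag: count / total for tag, count in counts.items()}


def sample_tags(distribution: Dict[str, float], k: int, rng: random.Random) -> List[str]:
    """Draw k error types so that synthetic errors follow the observed frequencies."""
    tags = list(distribution)
    weights = [distribution[tag] for tag in tags]
    return rng.choices(tags, weights=weights, k=k)


distribution = tag_distribution(observed_tags)
planned_errors = sample_tags(distribution, k=5, rng=random.Random(42))
print(distribution)
print(planned_errors)
```

Sampling this way keeps the synthetic data from over-representing rare mistakes just because they were easy to generate.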

Training

Finally, I was ready to train my final T5 model! Again, after reading research papers and plenty of trial and error, I ended up with the following stages:

- Basic Training (Task Adaptation): I used my synthetic dataset to fine-tune mT5 for around five epochs, until the loss was going down only very slowly.

- Fine Tune with Unchanged Sentences: We noticed that sometimes the model would change a correct sentence into another correct sentence. So we added a fine-tuning stage where we fed the model sentences that were already correct and penalized it if it changed them.

- Fine Tune with Production Data: Finally, we compiled a manual dataset with Squidgies production data and fine-tuned the model. This ensured the model would perform better for our specific use case.
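
The second stage above can be sketched as two small helpers: one that builds training pairs whose target equals the input, and one that measures how often a corrector rewrites sentences that were already fine. The `corrector` callable and the toy `over_eager` function are hypothetical stand-ins for the real model, which is not shown here.

```python
from typing import Callable, Iterable, List, Tuple


def identity_pairs(sentences: Iterable[str]) -> List[Tuple[str, str]]:
    """Training pairs where the target equals the input: 'leave this sentence alone'."""
    return [(s, s) for s in sentences]


def change_rate(corrector: Callable[[str], str], sentences: Iterable[str]) -> float:
    """Fraction of already-correct sentences that the corrector rewrites anyway."""
    items = list(sentences)
    if not items:
        return 0.0
    changed = sum(1 for s in items if corrector(s).strip() != s.strip())
    return changed / len(items)


def over_eager(sentence: str) -> str:
    """Toy stand-in for a model that rewrites correct text into other correct text."""
    return sentence.replace("help", "assistance")


correct_sentences = ["I could use your help cleaning.", "She is at home."]
print(identity_pairs(correct_sentences)[0])
print(change_rate(over_eager, correct_sentences))
```

Tracking the change rate before and after the stage is one simple way to check that the model has learned to keep correct sentences as they are.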

Optimization for Production

After we were happy with the results, we had to optimize the model for production, targeting fast inference and a small deployment size. We performed the following optimizations:

- Cleared the model of unused languages: mT5 covers many more languages than the ones we were interested in, so we trimmed the model to remove the rest. Surprisingly, we didn’t notice any impact on performance. This step reduced the size of the model by 60%.

- Quantization of the weights: Since T5 generates one token at a time, longer sentences take significantly longer to correct than shorter ones. We mitigated this performance issue by quantizing the weights. Quantization made inference up to 4x faster with no noticeable drop in the quality of the corrections.
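
To show what quantizing weights means in practice, here is a plain-Python sketch of symmetric 8-bit quantization. The scheme (one scale per tensor, integer range -127 to 127) and the example weights are assumptions chosen for illustration; the post does not state which quantization scheme or tooling was used in production.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using a single scale factor."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integers."""
    return [q * scale for q in quantized]


# Made-up weights, just to show the round trip.
weights = [0.12, -0.5, 0.33, 0.0, 0.49]
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print(quantized)
print(max_error <= scale / 2 + 1e-9)
```

Each weight now fits in one byte instead of four, and the rounding error per weight stays within half a quantization step, which is why the quality of the output often barely changes.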

Conclusion and Resources

After some time, Dan and I agreed that it would be nice to open-source the synthetic dataset so the community could benefit from it. So, I made it available on Hugging Face under the Apache 2.0 license.

The experience of taking this large machine-learning model from inception to production helped me grow and mature my understanding of what machine learning can do. This was only the first step of the journey, but certainly the most significant one. Please check out the Squidgies platform and get in touch if you have questions or comments about this process or want to contribute.
